I have created no fewer than 12 projects since my install. I have taken extreme care in taking photos so as to follow all the best practices. From here it has been hit and miss: I have rerun multiple projects, each time with different results although no setting changed. The biggest issue is that so many images are not included, even though they look perfect. I have tried everything from setting targets down to taking fewer images (as few as 30, as many as 200). No matter what I do, the images are never all processed; typically half, or a few more than that. I have run into situations where the sparse cloud looks OK, but when I do a dense cloud the software crops out everything but a square shape of the points, as well as other results with points all over the place or the image shifted. I would like to upload some of these images, if possible, for someone else to try. The concerning thing is that when I test image quality, ALTHOUGH THESE IMAGES ARE CRYSTAL CLEAR and at 24 megapixels, I rarely get an image score above .08 unless I take a picture of a black and white checkerboard... come on. Does anyone know what the image quality threshold is for the software?

Testing Results

-

Hi Gregchop,

If you can upload the images, we would be glad to run some tests.

Have you tried using masking? The background seems quite textured, which might cause problems during camera orientation:

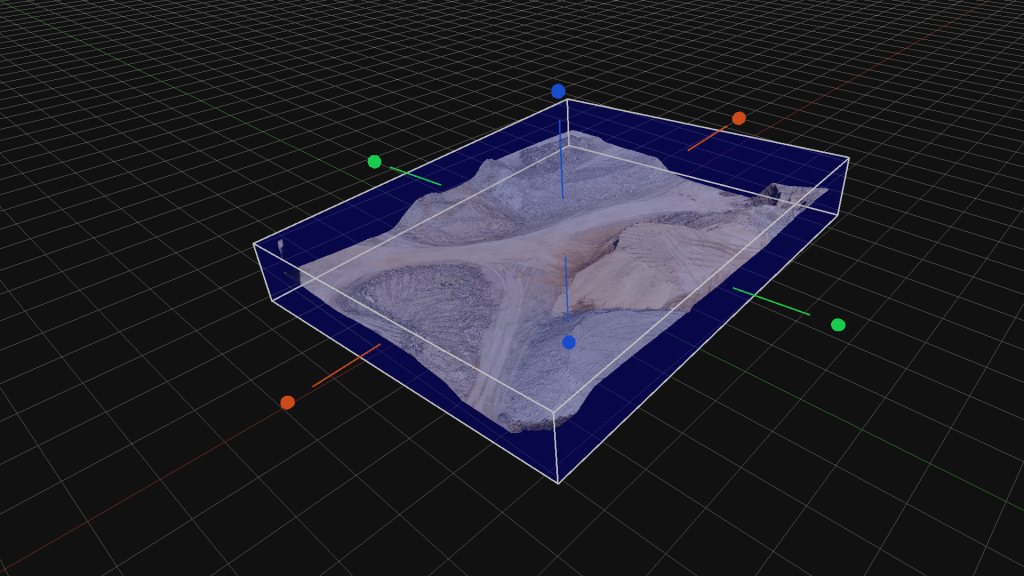

If the software is reconstructing only a small part, you should check the bounding box before running the dense point cloud creation:

In this recipe you will learn how to change the bounding box settings in 3DF Zephyr, a simple but important feature.

-

Yes, I have used masking extensively... I will upload the IRON MAN project to Dropbox. What email do you want me to give Dropbox access to? The IRON project has no masking; the Jason project is fully masked. I will upload both. In addition, I will upload IRON on a roto table and off.

Last edited by Gregchop; 2018-02-25, 06:59 PM.

-

The image quality index should be viewed as an index relative to the specific dataset. That photo is getting a low score because the background is out of focus and the faces are quite featureless. The index reflects how suitable a photo is for structure from motion algorithms. If you take a photo of a completely textured wall and it's all in focus, you will get a very high score.
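To illustrate why a checkerboard scores high while smooth or out-of-focus areas score near zero, here is a minimal sketch of a generic sharpness measure (variance of a Laplacian filter), scaled relative to the best image in the set. This is not Zephyr's actual index, whose formula is not given in this thread; the function names, the normalisation and the synthetic 8x8 images are all assumptions made for illustration.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over the interior pixels of a 2D grid."""
    h, w = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    return pvariance(responses)


def relative_scores(images):
    """Scale each image's sharpness by the sharpest image in the set.

    Hypothetical normalisation, used only to show what a
    dataset-relative index could look like.
    """
    raw = {name: laplacian_variance(gray) for name, gray in images.items()}
    best = max(raw.values())
    return {name: (value / best if best else 0.0) for name, value in raw.items()}


# Synthetic test images: a sharp checkerboard, a smooth ramp, a flat field.
checker = [[255 * ((x + y) % 2) for x in range(8)] for y in range(8)]
ramp = [[32 * x for x in range(8)] for y in range(8)]
flat = [[128] * 8 for _ in range(8)]

scores = relative_scores({"checker": checker, "ramp": ramp, "flat": flat})
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

In this toy setup the smooth ramp and the flat field produce no Laplacian response at all, so they land at the bottom of the scale no matter how "clear" they look, while the checkerboard defines the top.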

You can send the dataset directly to support@3dflow.net so that I or one of my colleagues can download it tomorrow.

Last edited by Andrea Alessi; 2018-12-11, 01:58 PM.

-

I have uploaded the project via Dropbox and added support@3dflow.net with read/write access. These files are very large. Good luck figuring out what's up with this issue; it has me pulling my hair out.

Just as a note, the Iron Man project would only pull in the images from the front. I see that a lot, where the back cameras do not pull in.

Last edited by Andrea Alessi; 2018-12-11, 01:59 PM.

-

This is another weird artifact I experienced when creating the sparse points. Two point clouds came up; however, when I moved on to the dense reconstruction, one of them disappeared. The weird thing is that the large point cloud is the back of the model, which did not get processed in the later steps.

-

Hi Greg,

I can't download the dataset, as it is shared with a Dropbox user. I also emailed you so you have my email address.

Can you please send me the download link there? If you prefer, I can also open up a shared directory on our server for you.

-

Hi Greg,

You're having issues with this dataset for a variety of reasons, but the main issue is the way the photos are shot (plus, the subject is quite difficult).

Here are a few tips:

Lower your ISO speed and try using a tripod. You have a good camera, but running at ISO > 400 and 1/30 sec will often give blurred photos with a shallow depth of field when shooting indoors. Your photos look good only when you view them as thumbnails. Take the time to look at them at their original size and you'll notice this. Here is an example of what I mean, from your DSC_0216.JPG at its native resolution:

See how the background is blurred, and even the foreground is not as sharp as you would expect from a Nikon. All your images suffer from this, which makes an already challenging dataset (not much texture, highlights) even harder. You're starting with a very hard subject!

Since you're shooting indoors, you should use a tripod and a longer exposure time with a smaller aperture (you're shooting at f/3.5, 1/30 s and ISO 1800 in this image). For example, I would use something around f/11, ISO 100 and 1 s and see how the image looks. If it's still too dark, increase the exposure time rather than the ISO speed. If it's too blurred, use an even smaller aperture, like f/16.
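As a rough check on how those settings compare, the standard exposure value relation EV100 = log2(N²/t) - log2(ISO/100) can be sketched as below. The helper names are made up for illustration, and the comparison assumes the lighting stays the same and that the original f/3.5, 1/30 s, ISO 1800 shot was correctly exposed, neither of which is confirmed in this thread.

```python
import math


def ev100(f_number, shutter_s, iso):
    """Exposure value, referenced to ISO 100, for a given camera setting."""
    return math.log2(f_number ** 2 / shutter_s) - math.log2(iso / 100)


def shutter_for_same_exposure(f_number, iso, target_ev100):
    """Shutter time in seconds that matches target_ev100 at a new aperture and ISO."""
    return f_number ** 2 / (2 ** target_ev100 * iso / 100)


original = ev100(3.5, 1 / 30, 1800)   # settings quoted for the example image
suggested = ev100(11, 1.0, 100)       # suggested starting point

# Positive value: the suggested settings gather less light than the original.
stops_darker = suggested - original

# Shutter time needed at f/11, ISO 100 to match the original brightness.
matching_shutter = shutter_for_same_exposure(11, 100, original)

print(f"suggested settings are {stops_darker:.1f} stops darker")
print(f"matching shutter time at f/11, ISO 100: {matching_shutter:.1f} s")
```

Under those assumptions the f/11, ISO 100, 1 s starting point comes out roughly 2.6 stops darker than the original shot, and a shutter time of about 6 s would match it, which is only practical on a tripod.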

If you have 10 minutes to spare, take a look at this short video tutorial we made about photography for photogrammetry: https://www.youtube.com/watch?v=E06kgYBftak

An easier solution would be for you to try to take pictures outside on a cloudy - yet bright - day.

As for the sets you sent, the second seems slightly better but lacks masks. I assume you used that one for your test, which is why you see both the back and the front of the model in your reconstruction. Most likely, Zephyr oriented the background. Always mask when you're rotating the object.

Try with easier subjects and move your way up to more challenging objects!

I'll try processing one of your datasets to see if they are salvageable, but I wouldn't expect too much. In these cases it's better to simply take the photos again.

I hope this helps. Feel free to ask if you have any more issues!

-

OK, I followed all your instructions... still not having luck with the quality of the reconstruction. The images are perfect and all get recognized... what now? Please give me a link to your file share, as I will upload the latest. While I don't have the Pro version, I did get my hands on the registration markers, but it appears Lite does not allow their use. I am getting the most bizarre results; I'm not even sure how to explain what I am seeing. To be honest, masking that many images is a real pain, but I will call this what it is: 3DF is not ready for prime time regarding close-ups. I have added the NEW Ironman project to the Dropbox like before; it's called IronMan 2.

Last edited by Gregchop; 2018-02-27, 09:44 PM.

-

Greg,

Masking is only required if you use a turntable setup. Markers are not required for this type of reconstruction.

I suggest you work on easier subjects before attempting plastic toys, as they require more expertise. I assure you Zephyr is more than ready for close-ups.

I will check your dataset as soon as I have time and email you instructions for uploading directly to us.

-

So, I have to disagree with you on several statements, based on results. Here goes:

I captured the Ironman (the set I sent you) and tried without registration markers and without masking. The results were horrible.

I then used those same sets with the registration markers but no masking. The results were a marked improvement.

I then used the same sets with masking and registration (keep in mind this was NOT a turntable) and the results were remarkable (a lot of work for a small project). While I understand your logic, having the registration markers in the image did improve the model because more features were found, and the masking removed all the background noise (fewer calculations). I also found that for this type of scan, setting it to Human makes a nominal improvement (I assume the software deploys a slightly different algorithm because of the smooth features humans have). With that said, I will also say that every time I scanned, I got different results, which points to something buggy in the software. I also found that placing fixed round printed targets on and around the subject further improves the scan by giving the software more features to register from.

I know in your view I am a novice at photogrammetry, but in fact I worked with Twin Coast Metrology (Jason) for over a year developing precision photogrammetry that also included a near-field projector timed with the camera shutter, which allowed better than .005 inch resolution/accuracy in the resulting point cloud (the base software was Geomagic). Having performed thousands of scans using a Prosilica camera, it was obvious to us that registration points were KEY for near-field scans.

I like the software GUI and think your team is on the right track, but you still have issues that will prevent true success where close objects are concerned (I have not tried video or far field). So far this is the best result I have been able to achieve with the same data I sent you.

-

Can you make the source imagery available to me?

Yes, I'm trustworthy. I've been with these 3Dflow guys for a long time. They're good people and they keep me around.

I can open a shared folder or if you've uploaded to their server, just give the go ahead and they'll share it with me.

I'd love to see the images and give my opinion.

-

Greg,

I can assure you markers do not increase accuracy per se. You have most likely helped the SfM algorithm a bit, but you could have used a newspaper or anything else under the subject.

Control points, which you still can't use in the Lite version, might help, on the other hand.

Glad to see you're learning how to improve your results.

(Hey Scott! It's been a while! Loved the chip reconstruction!)

-

The poker chip will be a perpetual work in progress!

(You really need to jump on the Facebook Group)